AI-Assisted Attacks in 2026: Why Cyber Defence Must Move Beyond Traditional Patching

Artificial intelligence has not created cybercrime from scratch. What it has changed is the operating model.

For years, technically capable attackers had an advantage because exploitation, malware development, infrastructure automation and post-compromise analysis required experience. In 2026, that gap is narrowing. Large language models and agentic coding tools are reducing the skill barrier, compressing attack timelines and allowing smaller groups, and in some cases low-skilled individuals, to execute work that previously required a more organised technical team.

This is not a theoretical shift. Anthropic reported that cybercriminals have already attempted to use Claude Code across multiple stages of real-world operations, including reconnaissance, credential harvesting, network penetration, stolen data analysis and extortion preparation. In one reported case, a threat actor targeted at least 17 organisations using AI-supported workflows.

At the same time, Google Cloud’s Mandiant is careful to frame the risk correctly: most successful intrusions are still rooted in familiar security failures such as exposed systems, weak identity controls, poor visibility and delayed remediation. AI is not replacing those fundamentals. It is accelerating the consequences when those fundamentals are weak.

The software supply chain is becoming the attacker’s preferred operating layer

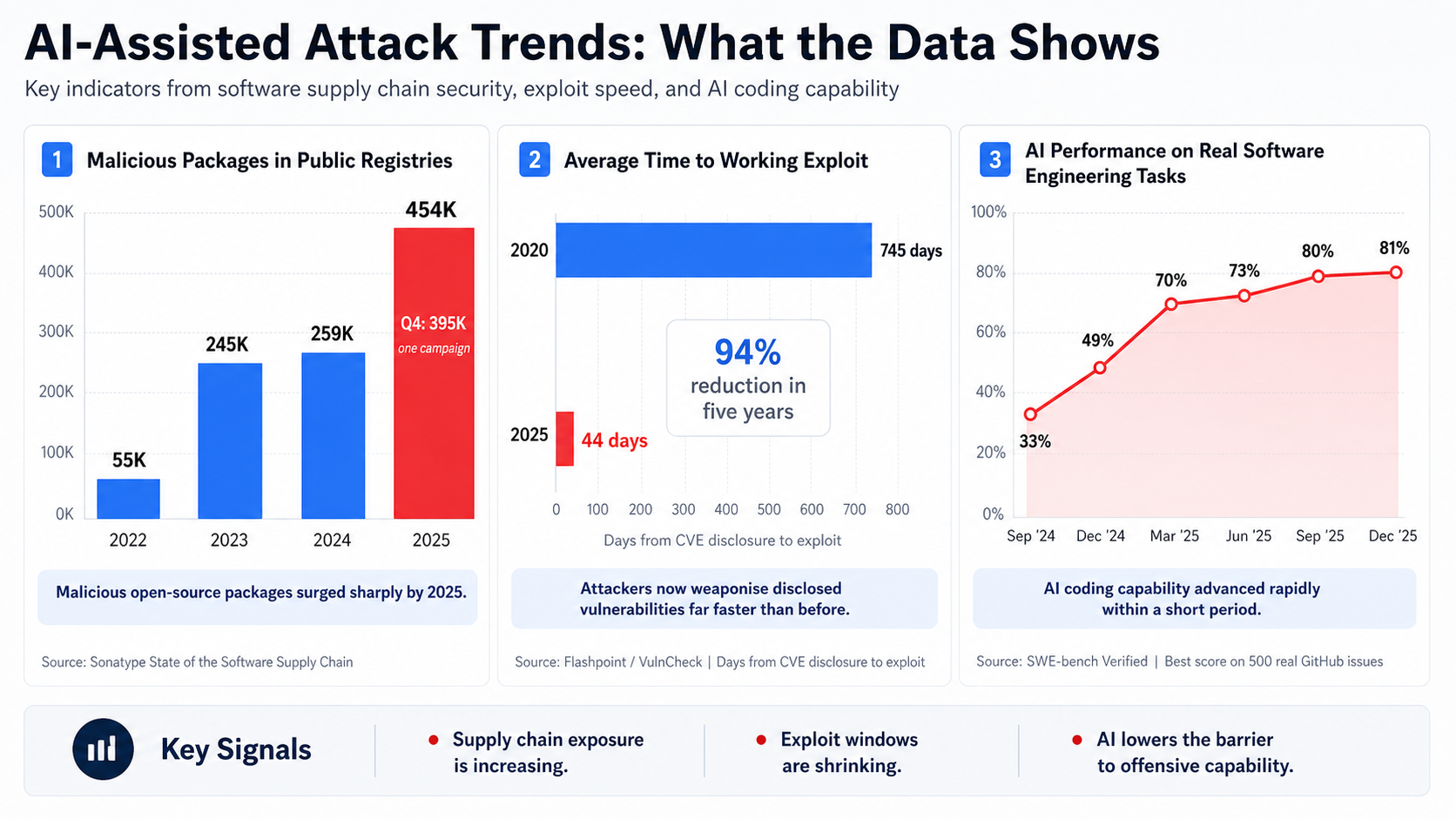

The most visible indicator is the growth of malicious packages in public registries.

According to Sonatype’s 2026 State of the Software Supply Chain data, more than 454,600 new malicious packages were identified throughout 2025, bringing the cumulative total of known and blocked open source malware to over 1.233 million packages across ecosystems including npm, PyPI, Maven Central, NuGet and Hugging Face. Sonatype also notes that more than 99% of open source malware activity in 2025 occurred on npm.

This matters because modern software delivery depends heavily on public packages, CI/CD pipelines, third-party libraries and developer tooling. Attackers no longer need to break directly into production first. They can attempt to compromise the path that feeds production.

The risk is structural:

An apparently legitimate package can contain malicious logic.

A trusted maintainer account can become the distribution point.

A CI/CD token can become the bridge into downstream environments.

A dependency update can become a supply chain event.

A poisoned package can reach developer workstations, build systems and production artefacts before traditional security controls understand what happened.

The practical takeaway is clear: software supply chain security can no longer be treated as a developer-side hygiene task. It is now an enterprise risk domain.

The exploit window is collapsing faster than enterprise patch cycles

The second major shift is speed.

Flashpoint’s research on n-day vulnerability trends (n-days being vulnerabilities that are already publicly known) shows that the average Time to Exploit dropped from 745 days in 2020 to 44 days in 2025. In the same analysis, Flashpoint states that n-days represented over 80% of Known Exploited Vulnerabilities tracked across the previous four years.

This changes the economics of vulnerability management. For many organisations, monthly patching cycles were designed around an older assumption: that there was a workable grace period between disclosure and exploitation. That assumption is now unsafe.

The gap becomes even more visible when compared with remediation performance. Edgescan’s 2025 Vulnerability Statistics Report identified an average remediation time of 74.3 days for high and critical severity findings, while larger enterprises with more than 1,000 employees left an average of 45.4% of vulnerabilities unresolved within a 12-month period.

In plain terms: attackers now move within weeks of disclosure, and sometimes within hours, while many organisations still take months to remediate.
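The arithmetic behind that comparison can be made explicit. The following is a minimal Python sketch that uses only the figures quoted above. The function names are illustrative, and the two averages come from different datasets (Flashpoint and Edgescan), so the result is indicative rather than a measured exposure window.

```python
# Figures quoted in this article. The averages come from different
# datasets, so comparing them directly is indicative only.
TTE_2020_DAYS = 745.0          # Flashpoint: average Time to Exploit, 2020
TTE_2025_DAYS = 44.0           # Flashpoint: average Time to Exploit, 2025
AVG_REMEDIATION_DAYS = 74.3    # Edgescan: high/critical remediation average


def percent_decline(before: float, after: float) -> float:
    """Return the percentage drop from `before` to `after`."""
    return (before - after) / before * 100.0


def exposure_gap_days(time_to_exploit: float, time_to_remediate: float) -> float:
    """Days a flaw stays unpatched after exploitation typically begins.

    Returns 0.0 when remediation is faster than exploitation.
    """
    return max(0.0, time_to_remediate - time_to_exploit)


if __name__ == "__main__":
    decline = percent_decline(TTE_2020_DAYS, TTE_2025_DAYS)
    gap = exposure_gap_days(TTE_2025_DAYS, AVG_REMEDIATION_DAYS)
    print(f"Time to Exploit decline 2020-2025: {decline:.1f}%")
    print(f"Average exposure gap: {gap:.1f} days")
```

On these numbers, the average Time to Exploit fell by roughly 94%, and the average high or critical finding stays open for about 30 days after exploitation would typically have begun.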

VulnCheck’s 2026 State of Exploitation analysis adds further pressure to this picture. Its research found that 28.96% of Known Exploited Vulnerabilities observed in 2025 were exploited on or before the day their CVE was published.

For internet-facing assets, identity systems, VPNs, firewalls, application gateways, SaaS integrations and exposed APIs, this means vulnerability management must become exposure-driven, not spreadsheet-driven.

Why AI changes the economics of cyber offence

The core issue is not that AI “hacks by itself” in every case. The more realistic risk is that AI helps attackers move faster across the attack chain.

A less-skilled operator can use AI to understand error messages, generate scripts, automate reconnaissance, summarise stolen files, adapt phishing language, troubleshoot malware logic or chain together public tooling. A more capable attacker can use the same technology to scale operations, test more variants and reduce manual effort.

SWE-bench Verified, a benchmark based on real GitHub issues, became one of the visible indicators of how quickly AI coding capability was improving. Epoch AI describes the benchmark as a human-validated subset designed to evaluate whether models can solve real-world software engineering issues.

There is a necessary caveat: OpenAI has argued that SWE-bench Verified is increasingly unsuitable for measuring frontier coding performance because of benchmark contamination and test limitations. That nuance matters. Still, the broader direction is not in doubt: coding agents have become materially more capable, and that capability benefits defenders and attackers at the same time.

For defenders, the strategic question is no longer “Can AI generate code?” It is “How do we secure an environment where code, scripts, payloads, infrastructure and attack variations can be produced at machine speed?”

Why traditional defence models are under pressure

Most organisations are still structured around a reactive sequence:

Discover the vulnerability.

Validate the finding.

Prioritise it.

Assign it to an owner.

Wait for a maintenance window.

Patch it.

Retest it.

Close the ticket.

That process is not wrong. Applied uniformly, without risk context, it is simply too slow for the current threat tempo.

The real operational problem is that security teams are being asked to handle three accelerations at once:

More vulnerabilities are being disclosed.

More malicious packages are entering public ecosystems.

More attackers can use AI to automate parts of the attack lifecycle.

The result is not just a higher alert volume. It is a higher decision burden. Teams must decide faster, with better context, and with less tolerance for blind spots.

The required shift: from chasing attacks to deleting attack paths

The next phase of cyber defence should not be built only around “detect everything faster”. That is important, but it is not enough.

The stronger approach is to remove entire classes of attack wherever possible.

For software supply chain security, this means:

Using private registries and approved package sources.

Enforcing dependency allowlists for critical environments.

Applying Software Composition Analysis continuously, not only before release.

Generating and maintaining SBOMs for production applications.

Monitoring package reputation, maintainer changes and suspicious version behaviour.

Using signed artefacts, provenance checks and tamper-resistant build pipelines.

Reducing long-lived secrets in CI/CD workflows.

Blocking direct production dependency pulls from untrusted public registries.
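As one illustration of the allowlist idea above, the following Python sketch checks a list of pinned dependencies against an approved set. It is a simplified example under stated assumptions: dependencies are written as `name==version` lines, the allowlist is an in-memory dictionary, and the function names and package entries are hypothetical. A real deployment would more likely enforce this in a private registry or a CI policy gate.

```python
from typing import Dict, List, Set, Tuple


def parse_pinned(line: str) -> Tuple[str, str]:
    """Split a 'name==version' line; raise if it is not an exact pin."""
    name, sep, version = line.strip().partition("==")
    if not sep or not name or not version:
        raise ValueError(f"dependency is not pinned to an exact version: {line!r}")
    return name.lower(), version


def check_against_allowlist(
    requirements: List[str], allowlist: Dict[str, Set[str]]
) -> List[str]:
    """Return a human-readable violation for every unapproved dependency."""
    violations: List[str] = []
    for line in requirements:
        # Skip blank lines and comments.
        if not line.strip() or line.lstrip().startswith("#"):
            continue
        try:
            name, version = parse_pinned(line)
        except ValueError as exc:
            # Unpinned or malformed entries are treated as violations.
            violations.append(str(exc))
            continue
        if name not in allowlist:
            violations.append(f"{name} is not an approved package")
        elif version not in allowlist[name]:
            violations.append(f"{name}=={version} is not an approved version")
    return violations


if __name__ == "__main__":
    # Hypothetical allowlist and requirements, for illustration only.
    approved = {"requests": {"2.32.3"}}
    wanted = ["requests==2.32.3", "requests==2.0.0", "leftpad==1.0", "flask>=2.0"]
    for problem in check_against_allowlist(wanted, approved):
        print(problem)
```

Failing closed on unpinned entries is a deliberate choice in this sketch: a floating version range can resolve to a package release that nobody has reviewed.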

For vulnerability management, this means:

Prioritising by exploitability, exposure and business criticality rather than CVSS alone.

Treating CISA KEV, VulnCheck KEV, EPSS, asset exposure and compensating controls as part of one decision model.

Creating emergency patch paths for internet-facing and identity-adjacent assets.

Reducing remediation SLAs for actively exploited vulnerabilities to hours or days, not weeks.

Validating whether a vulnerable asset is actually reachable from attacker-controlled paths.
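The points above can be combined into a single decision rule. The Python sketch below maps a finding to a remediation deadline using KEV membership, EPSS, exposure, business criticality and compensating controls. The `Finding` fields, the thresholds and the deadlines are all assumptions chosen for illustration, not values taken from any of the sources cited in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical record combining the signals listed above."""
    cve_id: str
    cvss: float                  # base severity score, 0.0 to 10.0
    epss: float                  # exploitation probability, 0.0 to 1.0
    in_kev: bool                 # listed in a known-exploited catalogue
    internet_facing: bool        # reachable from attacker-controlled paths
    business_critical: bool
    compensating_control: bool = False  # e.g. a virtual patch in place


def remediation_sla_hours(f: Finding) -> int:
    """Return a remediation deadline in hours (illustrative thresholds)."""
    # Actively exploited, reachable and unmitigated: emergency patch path.
    if f.in_kev and f.internet_facing and not f.compensating_control:
        return 24
    # Actively exploited, or likely to be exploited on an exposed asset.
    if f.in_kev or (f.epss >= 0.5 and f.internet_facing):
        return 72
    # Exposed, business critical, or very severe but without exploit signals.
    if f.internet_facing or f.business_critical or f.cvss >= 9.0:
        return 14 * 24
    # Everything else follows the regular cycle.
    return 30 * 24


if __name__ == "__main__":
    # Placeholder identifiers, not real CVEs.
    lab = Finding("CVE-0000-0001", cvss=9.8, epss=0.02, in_kev=False,
                  internet_facing=False, business_critical=False)
    auth = Finding("CVE-0000-0002", cvss=6.5, epss=0.70, in_kev=True,
                   internet_facing=True, business_critical=True)
    for finding in (lab, auth):
        print(finding.cve_id, remediation_sla_hours(finding), "hours")
```

In this sketch, a medium-severity flaw on an exposed authentication service gets a shorter deadline than a critical flaw on an isolated lab asset, which is the ordering that CVSS alone would reverse.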

For detection and response, this means:

Extending log retention beyond short operational windows.

Centralising logs from applications, network devices, identity providers, SaaS platforms, CI/CD systems and cloud workloads.

Moving from static IOC-only detection to behavioural anomaly detection.

Monitoring abnormal API usage, token activity, package installation behaviour and privilege escalation patterns.

Treating low-severity alerts on edge devices and identity systems as possible early-stage compromise indicators.

Google Cloud’s Mandiant also highlights the importance of expanded visibility, longer log retention and behavioural detection, particularly as attackers target edge devices and environments that often lack traditional endpoint telemetry.
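To make the contrast with static IOC matching concrete, the following Python sketch flags an observation that deviates sharply from an entity's own baseline, for example hourly API calls made with a single token. It is a deliberately simple z-score check using only the standard library. The function name, the threshold of 3.0 and the sample counts are assumptions for illustration, and production behavioural detection would normally account for seasonality and use richer features.

```python
from statistics import mean, pstdev
from typing import Sequence


def is_anomalous(
    history: Sequence[float], observed: float, threshold: float = 3.0
) -> bool:
    """Flag `observed` if it is far from the baseline formed by `history`.

    Uses a z-score: distance from the historical mean, measured in
    standard deviations. The default threshold is an assumption.
    """
    if len(history) < 2:
        # Not enough data to form a baseline; do not alert.
        return False
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # Perfectly flat baseline: any change at all is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


if __name__ == "__main__":
    # Hypothetical hourly API call counts for one service token.
    baseline = [100, 110, 95, 105, 90]
    print(is_anomalous(baseline, 104))   # within normal variation
    print(is_anomalous(baseline, 400))   # sudden spike in token activity
```

The point of the design is that the rule needs no prior knowledge of the attacker's infrastructure or tooling. It depends only on retained history, which is why longer log retention and behavioural detection go together.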

What this means for CISOs and security leaders

AI-assisted attacks should not push organisations into panic buying. The immediate need is not more disconnected tooling. The immediate need is security architecture discipline.

A mature response should focus on five priorities.

First, reduce the trusted attack surface. Every dependency, token, integration, SaaS connector and build pipeline step should be treated as a potential trust boundary.

Second, make exploitability the centre of vulnerability management. A critical vulnerability on an isolated lab asset is not the same as a medium-severity flaw on an internet-facing authentication service.

Third, secure the developer workflow. Developer machines, package managers, build runners, source control systems and CI/CD secrets are now high-value targets.

Fourth, strengthen application and API protection. WAAP, API security, virtual patching and runtime protection are becoming essential controls when remediation cannot happen immediately.

Fifth, improve operational visibility. Without logs, telemetry and historical context, organisations cannot understand whether a fast-moving attack was blocked, missed or already successful.