What Is NXLog? A Flexible, Scalable, and Vendor-Agnostic Approach to Modern Log Collection and Management

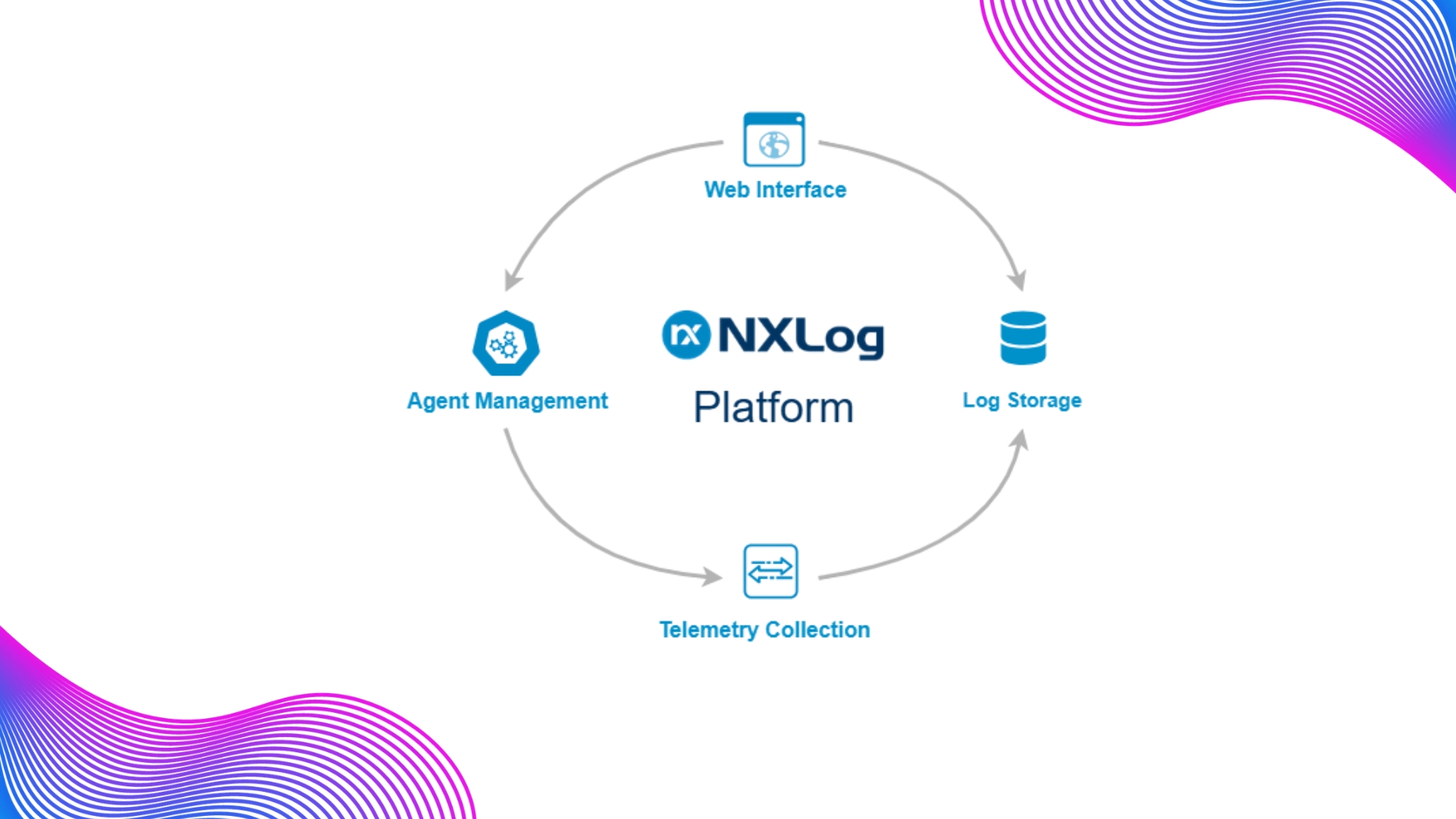

One of the most critical requirements in enterprise IT and cybersecurity operations is the ability to manage log data generated by diverse sources in a centralized, sustainable, and operationally meaningful way. NXLog is a platform built precisely for this purpose, offering the ability to collect, process, normalize, and route logs, metrics, and telemetry data to a wide range of destination systems. It is particularly well suited to heterogeneous environments where Windows, Linux, network devices, security tools, cloud services, and operational technology (OT) infrastructures coexist. At its core, NXLog is designed to support both agent-based and agentless collection models, optimize data close to the source, and deliver telemetry to SIEMs, data lakes, analytics platforms, and observability tools without creating vendor lock-in.

From a CyberDistro perspective, describing NXLog merely as a “log forwarder” would be an oversimplification. A more accurate positioning is to view it as a centralized log management and data routing layer. NXLog does far more than collect logs. It also supports critical operational functions such as parsing, filtering, enrichment, transformation, normalization, and retransmission in multiple formats. This approach provides a significant operational advantage, especially for organizations seeking to strengthen SOC, NOC, compliance, and centralized monitoring capabilities.

NXLog’s Core Value Proposition

One of NXLog’s greatest strengths is that it does not force organizations into a single, rigid data collection model. The platform can collect logs, metrics, and traces through both agent-based and agentless methods. Organizations therefore do not need to deploy an agent on every asset, yet they can still install agents wherever deeper visibility is required. In large-scale environments, this hybrid capability is especially important from both an architectural and an operational standpoint.

In practical terms, this means that in some systems, running a local agent is the most effective and technically accurate approach, while in others, remote collection is more realistic due to security policies, administrative restrictions, or technical limitations. Because NXLog can bring these two models together within the same architecture, it becomes a particularly strong fit for complex, multi-layered enterprise environments.

What Features Does NXLog Offer?

NXLog’s key capabilities can be grouped into several major areas.

First, it offers broad support for sources and formats. The platform is designed to work with Windows Event Log, syslog, file-based logs, selected integrations, and a wide range of data formats. On the syslog side, it can collect, parse, and route various syslog formats. Likewise, it provides parsing capabilities for structured data formats such as JSON, XML, and CSV.

Second, its data processing layer is highly capable. NXLog Agent can parse incoming records and prepare them for filtering, field extraction, rewriting, enrichment, and normalization. Its architecture supports functions such as JSON/XML parsing, regular expression-based field extraction, string manipulation, and mapping data into a common taxonomy. This enables logs from different vendors and systems to be transformed into a more consistent and analysis-ready format.
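To make regular expression-based field extraction concrete, here is a minimal Python sketch of the general technique. It is not NXLog configuration and does not use any NXLog API; the pattern, the function name `extract_fields`, and the field names `user`, `src_ip`, and `src_port` are assumptions chosen for illustration.

```python
import re

# Hypothetical pattern for an SSH "failed password" message.
# The named groups become the extracted fields.
SSH_FAILED = re.compile(
    r"Failed password for (?:invalid user )?(?P<user>\S+) "
    r"from (?P<src_ip>\d{1,3}(?:\.\d{1,3}){3}) port (?P<src_port>\d+)"
)


def extract_fields(message: str) -> dict:
    """Return named fields from a message, or an empty dict if nothing matches."""
    match = SSH_FAILED.search(message)
    if match is None:
        return {}
    fields = match.groupdict()
    # Simple string manipulation and type conversion after extraction.
    fields["src_port"] = int(fields["src_port"])
    fields["user"] = fields["user"].lower()
    return fields


sample = "Failed password for invalid user Admin from 203.0.113.7 port 52144 ssh2"
record = extract_fields(sample)
```

Once the free-text message has been turned into named fields, later steps such as filtering or mapping into a common taxonomy can work on keys rather than on raw strings.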

Third, NXLog provides strong routing and integration flexibility. Its platform model is built to transfer data efficiently to SIEMs, APM platforms, security analytics solutions, and data lake environments. This allows organizations to treat the log collection layer as an independent service rather than tying it directly to a single analytics or storage platform.

Fourth, scalability and high-volume data processing are among its notable strengths. NXLog’s multi-threaded and event-driven architecture is designed to handle high log volumes, parallel input streams, and complex processing scenarios. This is particularly valuable in environments with high events-per-second (EPS) rates, where performance and efficiency are critical.

Deployment Models: NXLog Is Not Limited to a Single Implementation Approach

One of the most important considerations when evaluating NXLog is its deployment flexibility. No two organizations have identical infrastructures, and log collection should not be forced into a one-size-fits-all model. NXLog addresses this by supporting a broad range of deployment approaches, including centralized management, distributed collection, relay architectures, and combinations of agent-based and agentless collection.

In practice, the following deployment models are especially relevant for NXLog:

1. Agent-Based Deployment

In this model, NXLog Agent is installed directly on the system generating the logs. The agent reads logs locally from the source, parses them, filters them, normalizes them, and forwards them to a centralized destination. This approach delivers deeper visibility, greater control over data processing, and better optimization close to the source. It is often the most effective model for Windows Event Logs, local file-based logs, and detailed application logs.

2. Agentless Deployment

In this model, no agent is installed on the log source itself. Instead, NXLog receives or collects logs remotely over the network. Depending on the use case, this may involve transports such as TCP, UDP, and HTTP, and collection methods such as syslog, Windows Event Collector/Windows Event Forwarding (WEC/WEF), Windows Management Instrumentation (WMI), and SNMP traps. This model is particularly valuable in environments where agent deployment is not feasible, where third-party devices are heavily used, or where operational constraints limit direct installation.

3. Hybrid Deployment

In real-world enterprise environments, this is often the most effective model. Agents can be installed on critical servers, while network devices, appliances, or closed systems can be monitored through agentless methods. This provides both depth and breadth of coverage. Because NXLog supports both agent-based and agentless collection, hybrid deployments can be implemented naturally and efficiently.

4. Relay and Load-Balanced Collector Architectures

In distributed infrastructures, concentrating all log traffic into a single collection point can create both performance and operational risks. NXLog addresses this through relay-based architectures, load balancing, and automatic failover, so the log collection layer can be designed for resilience and scalability as well as functionality.
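The failover idea can be sketched in a few lines of Python. This is a conceptual illustration only, not how NXLog implements failover internally; the function `send_with_failover` and the two stand-in senders are hypothetical, and a real sender would open a network connection to a collector.

```python
from typing import Callable, Sequence

# A sender is any callable that delivers a payload or raises OSError on failure.
Sender = Callable[[bytes], None]


def send_with_failover(payload: bytes, senders: Sequence[Sender]) -> int:
    """Try each collector in order; return the index of the one that accepted."""
    last_error = None
    for index, send in enumerate(senders):
        try:
            send(payload)
            return index
        except OSError as exc:  # connection refused, timeout, and similar
            last_error = exc
    raise ConnectionError("all collectors unavailable") from last_error


# Stand-in senders for the demonstration (hypothetical, no real network I/O).
delivered = []


def primary_down(payload: bytes) -> None:
    raise ConnectionRefusedError("primary collector unreachable")


def secondary_ok(payload: bytes) -> None:
    delivered.append(payload)


used = send_with_failover(b"<134>test event", [primary_down, secondary_ok])
```

Here the primary collector refuses the connection, so the event is delivered through the second entry in the list. A load-balanced variant would rotate the starting position instead of always trying the first collector.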

Agent-Based vs. Agentless Architectures: Which One Should Be Preferred?

One of NXLog’s key strengths is that it treats agent-based and agentless approaches not as competing models, but as complementary ones.

The agent-based model provides richer telemetry and more controlled processing because it has direct access to the log source. For example, when direct access to Windows Event Logs, local file-based logs, or source-side filtering is required, the agent model is typically the more accurate choice. In addition, because filtering and normalization can be applied before the data is forwarded centrally, bandwidth consumption and downstream storage or licensing costs can also be optimized.

The agentless model, on the other hand, offers operational simplicity and broader compatibility. It is often more practical for network devices, security appliances, OT systems, legacy infrastructures where agents cannot be installed, or segments with restricted administrative access. Typical examples include syslog-enabled devices, network equipment generating SNMP traps, and Windows environments using WEC/WEF-style remote collection.

The core decision point is straightforward: do you need maximum visibility, or do you need broad coverage with minimal intervention? Because NXLog supports both requirements under a single product framework, organizations can reduce unnecessary tool fragmentation during architectural design.

How Does NXLog Approach the Log Collection Process?

NXLog’s model goes beyond a simple “collect and forward” approach. It is easiest to understand as five distinct stages.

The first stage is data ingestion. Logs can be read from files, collected from Windows Event Log, received as syslog, or gathered via agentless protocols. This flexibility makes it possible to bring together a wide variety of source types within a single collection layer.

The second stage is parsing. Raw log data is rarely clean or structured enough for direct analysis. NXLog addresses this by parsing the data, extracting fields, and making the log content machine-readable. It supports ready-to-use parsing mechanisms for formats such as JSON, XML, CSV, and syslog, while also allowing more advanced handling through NXLog’s own language and regex-based methods.
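As one small example of what parsing involves, the sketch below decodes the priority (PRI) header that prefixes a syslog message. In the syslog protocol the PRI value encodes facility and severity as `facility * 8 + severity`. The Python code is illustrative and independent of NXLog; the function name `parse_pri` and the output keys are assumptions.

```python
import re

# A syslog line starts with "<PRI>", where PRI is a number from 0 to 191.
PRI_PATTERN = re.compile(r"^<(\d{1,3})>(.*)$", re.DOTALL)

# Severity keywords for codes 0 through 7.
SEVERITIES = ["emerg", "alert", "crit", "err", "warning", "notice", "info", "debug"]


def parse_pri(line: str) -> dict:
    """Split a syslog line into facility code, severity name, and the remainder."""
    match = PRI_PATTERN.match(line)
    if match is None:
        raise ValueError("missing syslog PRI header")
    pri = int(match.group(1))
    if pri > 191:
        raise ValueError("PRI value out of range")
    facility, severity = divmod(pri, 8)
    return {
        "facility": facility,
        "severity": SEVERITIES[severity],
        "message": match.group(2),
    }


parsed = parse_pri("<134>Oct 11 22:14:15 fw01 action=allow")
```

A PRI of 134 decodes to facility 16 (local0) and severity 6 (info). The rest of the line would still need timestamp, host, and key-value parsing before it is fully structured.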

The third stage is processing and enrichment. At this stage, logs can be filtered, unwanted records can be discarded, new fields can be added, content can be rewritten, and events can be prepared for the target system. In environments where SIEM costs scale with data volume, source-side filtering and transformation can reduce costs substantially. This stage also prepares events for the normalization step that follows.
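The following Python sketch illustrates source-side filtering and enrichment in general terms. It does not represent NXLog syntax; the function `filter_and_enrich`, the `site` field, and the choice to drop `debug` events are all assumptions made for the example.

```python
from typing import Iterable, Iterator

# Hypothetical policy: debug-level events are not worth forwarding.
DROP_SEVERITIES = {"debug"}


def filter_and_enrich(events: Iterable[dict], site: str) -> Iterator[dict]:
    """Discard unwanted records and add a context field to the rest."""
    for event in events:
        if event.get("severity") in DROP_SEVERITIES:
            continue  # filtered out before it reaches the destination
        # Build a new dict so the input record is left unmodified.
        yield {**event, "site": site}


raw_events = [
    {"severity": "debug", "message": "cache refreshed"},
    {"severity": "err", "message": "disk failure on /dev/sda"},
    {"severity": "info", "message": "user login"},
]
forwarded = list(filter_and_enrich(raw_events, site="dc-east"))
```

In this toy input one of three events is dropped before forwarding. How much volume is saved in practice depends entirely on the log mix and the filtering policy.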

The fourth stage is normalization. Converting logs from different vendors into a common schema is essential for improving correlation quality and analytical consistency. NXLog is designed to address this by mapping parsed data into a common field structure and reproducing it in the target format required downstream.
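Normalization can be pictured as a per-source field mapping onto one shared schema. The Python sketch below shows that idea under stated assumptions: the vendor names, the source field names, and the target fields `source_ip`, `dest_ip`, and `action` are invented for illustration and do not correspond to any specific NXLog schema.

```python
# Hypothetical per-vendor mappings from native field names to a common schema.
FIELD_MAPS = {
    "vendor_a": {"src": "source_ip", "dst": "dest_ip", "act": "action"},
    "vendor_b": {
        "SourceAddress": "source_ip",
        "DestinationAddress": "dest_ip",
        "Disposition": "action",
    },
}


def normalize(event: dict, vendor: str) -> dict:
    """Rename known fields to the common schema and unify value casing."""
    mapping = FIELD_MAPS[vendor]
    # Unknown fields are passed through under their original names.
    normalized = {mapping.get(key, key): value for key, value in event.items()}
    if "action" in normalized:
        normalized["action"] = str(normalized["action"]).lower()
    return normalized


event_a = normalize({"src": "10.0.0.5", "dst": "10.0.0.9", "act": "DENY"}, "vendor_a")
event_b = normalize(
    {"SourceAddress": "10.0.0.5", "DestinationAddress": "10.0.0.9", "Disposition": "Deny"},
    "vendor_b",
)
```

After normalization both events carry the same keys and the same `action` value, so a single correlation rule can cover both sources.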

The fifth and final stage is routing and forwarding. Once processed, the data can be sent to a SIEM, a centralized log server, a data lake, or another analytics platform. This is where NXLog’s value proposition becomes especially clear: the objective is not simply to collect data, but to deliver the right data, in the right format, to the right destination.
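Rule-based routing can be sketched as a list of predicates paired with destinations. This Python example is a conceptual model rather than NXLog routing syntax; the destination names `siem`, `ops_alerts`, and `data_lake`, the `category` field, and the rules themselves are hypothetical.

```python
from typing import Callable, List, Tuple

# Each rule pairs a predicate over the event with a destination name.
Rule = Tuple[Callable[[dict], bool], str]

RULES: List[Rule] = [
    (lambda e: e.get("category") == "security", "siem"),
    (lambda e: e.get("severity") in {"err", "crit"}, "ops_alerts"),
]
DEFAULT_DESTINATION = "data_lake"


def route(event: dict) -> List[str]:
    """Return every destination whose rule matches, or the default if none do."""
    matches = [dest for predicate, dest in RULES if predicate(event)]
    return matches or [DEFAULT_DESTINATION]


targets_security = route({"category": "security", "severity": "crit"})
targets_other = route({"category": "app", "severity": "info"})
```

An event can fan out to more than one destination, as the first call shows, while events that match no rule fall back to the default destination.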

Why Is NXLog Important from a Log Management Perspective?

In enterprise environments, log management is not solely a security function. Application teams, infrastructure teams, compliance teams, and audit processes all depend on reliable and structured log data. NXLog’s centralized approach makes it possible to address these different requirements within the same operational framework.

For example, security teams may need to collect data from endpoints and security products, while operations teams want centralized visibility into system and network logs. Compliance teams, meanwhile, are more focused on consistency, retention policies, and auditability. NXLog’s centralized log management, data processing, and routing model can unify these needs within a shared telemetry pipeline. This becomes even more valuable when governance-related requirements such as role-based access control, audit trails, retention management, and data masking are also part of the broader strategy.

Key Use Cases

NXLog is particularly well positioned in the following scenarios:

Centralized Log Collection Projects

For organizations that want to aggregate dispersed system logs into a single point of control, NXLog provides a strong collection and routing layer. This is especially relevant in environments where Windows, Linux, and network devices operate together.

SIEM Pre-Processing

In scenarios where raw logs should not be sent directly into a SIEM, NXLog can deliver significant value by filtering, normalizing, and enriching events beforehand. This improves analytical quality and reduces the volume of data the SIEM has to ingest.

Environments with Limited Agent Deployment

In infrastructures where deploying agents is difficult or not possible, such as network devices, third-party appliances, legacy systems, or OT segments, NXLog can extend visibility through agentless collection models.

Large-Scale Deployments

With support for centralized agent management, relay clusters, load balancing, and failover, NXLog can support scalable architectures across high-volume, multi-location environments.

Windows Log Management

NXLog is also particularly relevant in Windows-heavy enterprise environments, where both agent-based Windows Event Log collection and remote collection approaches such as WEC/WEF can be leveraged together for more flexible deployment.